After Action Report: Anatomy of a Social Engineering Engagement and Picking Up the Pieces

A lot of folks ask us about our social engineering (SE) engagements. While we can't share specifics such as video or details about individuals without compromising client confidentiality, we can talk about our experiences and what we and our clients have learned. A while back, one company contracted us to conduct a full-blown social engineering engagement that included phishing, spear phishing of executives, vishing, and onsite impersonation. Imagine our excitement at working with a company genuinely committed to a comprehensive security program! This after-action report is NOT a breakdown of the company's vulnerabilities; it is a quick overview of what we were able to accomplish and what can be done to mitigate the social engineering threat.

Step One: Snooping

What can a little Internet snooping tell an attacker? As most of you know, the answer is just about everything, especially if you're on social media. Many people are just plain sociable. And even if you aren't, you probably have a friend or family member who is. You may be extremely careful about releasing personal information, but if your mother is incredibly proud of her babies and wants to share, that is a vector that's hard to lock down. ABC recently ran a story citing an estimate that 1 in 40 children under the age of 18 have already had their identities stolen, much of that due to social media. Ouch. The worst my mom ever did was haul out embarrassing baby pics.

Yeah. Not really me, by the way.

In some ways, companies have an even harder time. They are actually required to communicate: with customers, employees, partners, and the world in general. So they have to balance effective communication against TMI. Unfortunately, even the most innocuous information is a potential attack vector for a skilled social engineer.

The final factor is a giant open source monster. Sites like Spokeo do nothing but aggregate information on people and other entities. Google Earth will show anyone who asks a picture of your front doorstep. We leave "digital exhaust" not just through what we do, but also by neglecting to monitor and act on what's already out there.

Our online investigation of the client revealed a number of things, sight unseen. We knew exactly what their corporate buildings looked like from the street, down to where the employees took their smoke breaks. We also collected a lot of information about the company in general, as well as about the executives who would later be targets of our spear phishing campaign. We moved on to the next phase as prepared as we could be.

Step Two: Casting the phish

How juicy does the bait have to be to catch people? If a message can incite a little emotion, chances are good that the recipient will click. How would you feel if your company wanted to block all access to social media? In this particular democratic organization, recipients were invited to log in and voice their opinions on the proposed policy. To add a little heat, they were given access to the "feedback website" for only 24 hours. As it turns out, 51% of recipients wanted to be heard. Assuming this sample reflected the company as a whole, half of the organization would have unwittingly fallen victim to this type of phish.

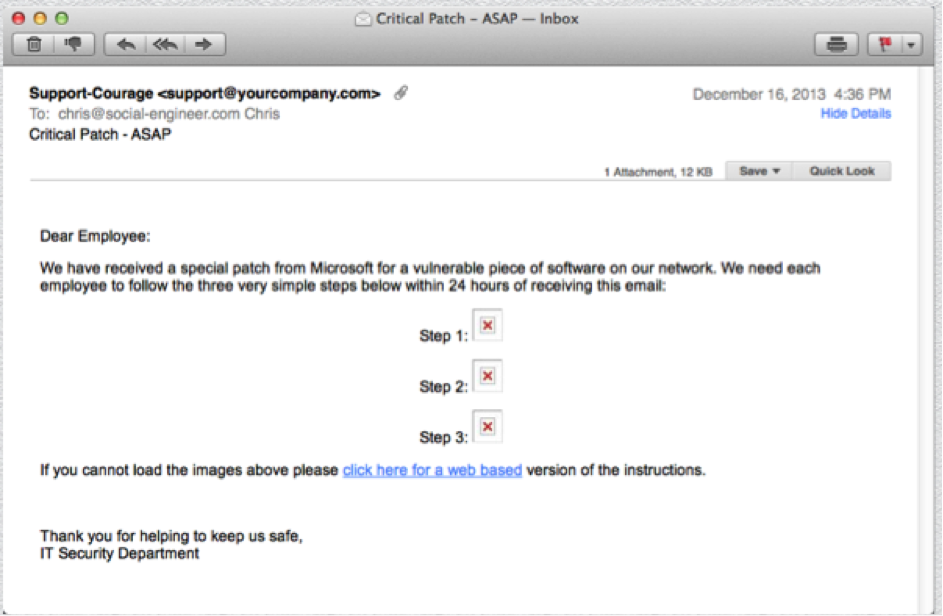

After about two weeks and some training, we made a second attempt on the same population with a much harder phish. Chris is especially proud of this one.

Everyone has probably seen the little red X's that indicate an image can't load. Pretty sneaky, Chris. Even so, this group improved dramatically: only 21% of recipients clicked the link, down from 51% the first time. That's after only about three weeks in total and two very short training sessions. What our client learned from this brief experiment is that proper education works. Imagine their results once this type of education becomes a regular part of their security program.

Step Three: Spearing big phish…sorta…

Anyone following the news knows that spear phishing is a big deal. It is a highly targeted form of phishing in which an attacker researches a specific individual and crafts a message that's hard to detect as illegitimate and even harder to resist. If you send me a LinkedIn request, I'll probably ignore it. But a link to BaneCat? Slam dunk.

Our client identified 14 C-level individuals in their company to be "participants" in our spear phishing campaign. They were chosen because of their significant access to corporate data or resources. In our research, we found a plethora of information about these folks' likes, interests, and activities. The point here is not that we're super l33t. Rather, it's that most people hand over information simply by not locking it down. Everything we found came from open source information. We were pretty sure we'd nailed this one.

After crafting 14 very personal emails and launching our campaign, we waited. And waited. We even had our point of contact (POC) confirm that the emails were received. Much to our surprise, we had only 3 clicks by the end of the campaign, and one of those came from someone outside the original 14 who had been forwarded the email.

We then investigated with our client and discovered something very cool. Each of these 14 executives had an assistant who personally screened their email, determined whether each message was solicited and/or from a known sender, and filed it appropriately. It was this human element that accounted for the client's overwhelming success in this portion of the assessment. Does this mean we should all get assistants? Although that would be awesome, the answer is no; with just a little extra training and awareness, anyone can become proficient at identifying messages that don't quite ring true.

Step Four: Singing telegram!

Next, Chris and I packed our dark glasses and super-spy cameras and headed to the client's locations. Four buildings, three days, two states, no sleep. This client faces some big challenges when it comes to physical plant security, not the least of which is sharing buildings with other companies and with retailers open to the general public. Despite their great physical security team and RFID badging, we gained access to most of their secured locations by posing as inspectors and, yes, a singing telegram (I'll let you guess who got to do that one). We didn't need to do a lot of sneaky stuff; we took advantage of high-traffic times and locations, acted like we belonged there, and exploited people's general helpfulness. Using these principles, we accessed areas such as their corporate mailroom, NOC, and executive offices, and roamed freely without ever being stopped.

Despite our success, there is an important takeaway. There was one building we absolutely could not breach. Access was controlled by a single individual, a courteous and mild-mannered young man we'll call Ted. Ted knew his job. He understood the conditions under which he was allowed to let us in, and he knew we didn't meet them, by a long shot. We asked nicely. We joked. We smiled. We cajoled. We showed him our fake work orders, business cards, and badges. We didn't threaten or cry, but I have a feeling that wouldn't have worked, either. Ted calmly and courteously denied us entry: so sorry, sir/ma'am. If there were more Teds in the world, we'd be out of a job. The lessons here are that 1) Ted understood the rules, 2) Ted was comfortable saying "no" (see the Social-Engineer.Org Newsletter, Vol. 4, Issue 51), and, man, 3) Ted never broke a sweat.

Step Five: The President, line one

The last part of our campaign was voice elicitation (vishing). The purpose was to test the effectiveness of corporate policies on releasing sensitive information. To that end, the client identified various pieces of data for our team to attempt to obtain. To carry out this testing, we did a couple of things. First, we spoofed telephone numbers so our calls appeared to originate from within the corporate network. Then we impersonated corporate staff from either IT support or HR. Those of you who follow us regularly know what these two simple things did for us: 1) they made us "safe," since we were clearly members of the tribe, and 2) they gave us authority based on our apparent roles within the company. Both factors improved our chances of gaining cooperation. On top of this, we took advantage of the timing, close to a major holiday, when people would likely be in a hurry to get through their days and on to more enjoyable things. I even reached a woman who was in her car on the way to a family gathering. She was in an extremely good mood, and extremely cooperative in providing the information I requested.

After the smoke cleared, the numbers spoke for themselves. Of the people contacted, 73% confirmed their email addresses and provided corporate ID numbers, and 47% provided their dates of birth and SSNs. Only 7% responded with a hard shutdown.

One final thing to think about. How many of these folks went home that night and thought, "Huh, I really gave out a lot of personal information today to a person I don't know"? If we did our jobs properly, hopefully few, if any. At most, they went home muttering about the inefficiencies of the corporate network or HR system, or maybe (I like to think) about how nice it was to speak to a friendly voice from IT or HR. The professional social engineer does NOT want to draw attention to themselves. Remember, a malicious attacker may make multiple attempts on the same target, content to collect small bits that eventually add up to a detailed picture of an organization or individual.

The wrap up

Overall, this was an excellent engagement. First and foremost, the client learned that their foundational pieces were in place: supportive management, excellent technical controls, and good policies. But they also found that their people got around these controls in ways that were potentially risky to both the company and themselves. We left them with their work cut out, and we'll see how they've improved this year.

For those of you reading this and thinking of your own organization, we offer these three main takeaways:

1. Take defensive action: Organizations are responsible for teaching their people how to handle information and resources on both a corporate and a personal level. They also need clear guidelines on what to do if and when something bad happens. The last thing you want is an employee who clicks on a malicious email and simply deletes it out of fear or because they don't know what else to do.

2. Conduct realistic pentesting: A network pentest is only about half of what you need to be secure. Technology simply can't keep you safe all the time. Make sure you understand your true vulnerability to human-based attacks by having social engineering pentests conducted by people who truly understand the field.

3. Promote constant security awareness: Everyone hates canned annual security training. More importantly, checking the compliance boxes doesn't mean you're safe. If you make your training practical, relevant, and fun (yes, fun training), you can go a long way toward creating a culture that thinks critically about how information and resources should be shared, stored, and managed.

OK, I'm off my soapbox. Get out there and make your companies safe. If you're interested in a condensed version of the three points above, you'll find it and more on our infographic, linked in the sources below. Take care and stay safe.

Written by Michele Fincher

Sources:

https://abcnews.go.com/Business/proud-moms-compromise-kids-identities/story?id=23567818

https://www.youtube.com/watch?v=5ywjpbThDpE

https://www.social-engineer.org/newsletter/Social-Engineer.Org%20Newsletter%20Vol.%2004%20Iss.%2051.htm/

https://www.social-engineer.org/social-engineering/social-engineering-infographic/

https://whnt.com/2018/10/01/fraud-summit-held-in-huntsville-officials-warn-against-caller-id-spoofing/
